I was recently in NYC. It was one of the most interesting experiences I’ve ever had.

Everyone has their own perspective when visiting the city, and I asked several people about theirs before the trip, which helped immensely when planning my itinerary. Now it’s my turn to pay it forward. Here is my guide / personal perspective on New York.

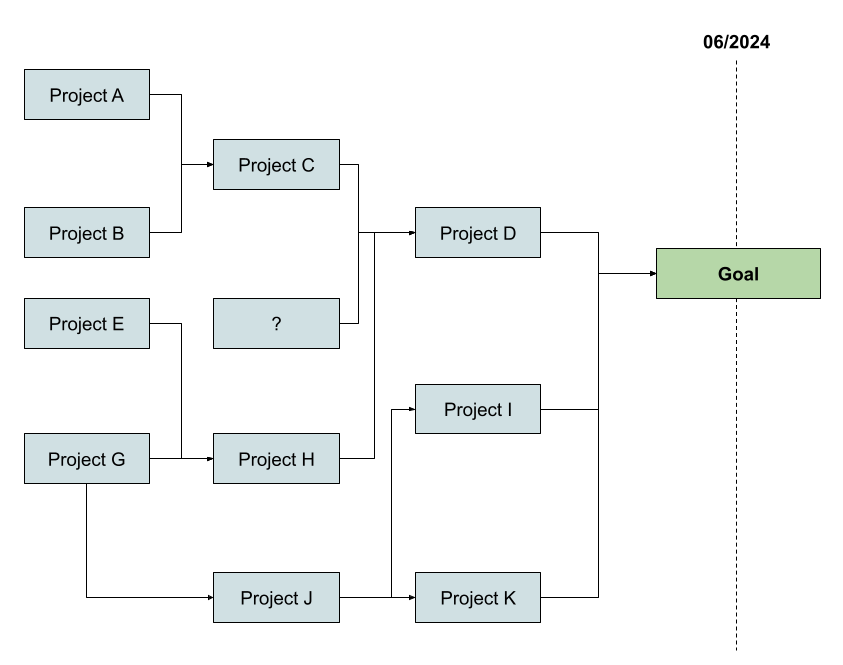

Maps

Having a pre-curated list of points of interest on Google Maps was one of the most useful and time-efficient aspects of the entire trip. It allowed me to quickly improvise and make the most of my surroundings, since I could just look at the map to check which nearby places I could visit at any given time.

Museums

I didn’t consider myself an excessive photographer. I discovered on this trip that I was probably wrong. I can’t recall the last time I took so many photos; it was like being in an outstanding candy store of visual goodies.

Intrepid Museum

Let’s start with fashion. I’ve been told that my glasses make me look nerdy / square / too serious / too much like an engineer. Well, thanks to this museum piece, I can now say they were developed for space walks:

Oh, and look back and you will also see the Space Shuttle Enterprise, the first orbiter of the Space Shuttle system:

At the Intrepid Museum you can find these glasses and the space shuttle, sit inside a Mercury capsule or an A-6 Intruder cockpit, and see real-life jets like the Lockheed A-12.

Where is the Intrepid Museum? The museum is a World War II–era aircraft carrier. Yes, the vessel is the museum. You can explore several of its interior sections, including the bridge, living quarters, and gun and bomb bays, and ride the same narrow escalator that pilots took to the flight deck, all spread across several floors and different explorable rooms.

Right beside the Intrepid lies the USS Growler, a diesel-powered submarine retrofitted to deliver Regulus nuclear missiles as a form of nuclear deterrence during the Cold War. You can go inside the submarine and get an intimate sense of what life was like during its two-month patrols. The crew faced the constant prospect of being called upon to launch a nuclear missile onto Russian soil, which would not only destroy their target but most likely kill them in the process, since launching and reloading Regulus missiles took a long time and would expose the submarine to enemy reconnaissance.

- Since it was a diesel submarine, it needed to be at or near the surface (it was not possible to push out the exhaust gases when deep in the ocean) to run a set of its 3 engines and charge the on-board batteries that powered the submarine and its electric motors.

- It also needed to be periodically refueled, so the submarine ran a long circular patrol in the Pacific Ocean to refuel before eventually sitting near Russian enemy lines for dozens of days.

- The crew had to maintain absolute secrecy about their mission, and none of their partners knew the context of it.

- The families of the crew members knew nothing about the mission, nor that, if the crew did launch a nuclear missile, the submarine would be exposed and the crew most likely killed while launching the nuclear load (not to mention the annihilation that comes from the nuclear warhead).

I found the story and details of this submarine and its mission so interesting that, right after the tour, I went in search of staff members to ask a series of questions that had come up during the visit. They very kindly and patiently took the time to answer them, and one of the ladies even asked me if I was an engineer. I’m not sure what gave that away. It was either the questions, or my glasses :)

MET

The MET, or Metropolitan Museum of Art, has two locations: the museum on Fifth Avenue is the most famous one and is usually what people mean when they mention the MET. The other, lesser-known branch is in Upper Manhattan: the MET Cloisters.

MET: Fifth Avenue

- If you like the British Museum, you will love the MET on Fifth Avenue.

- They reconstructed the Temple of Dendur, a Roman Egyptian religious structure from around 10 BCE that was originally located in Tuzis (later Dendur). Need I say more?

- This massive museum spans classical antiquity and ancient Egypt; paintings and sculptures from nearly all the European masters; and an extensive collection of American and modern art. All of these are intertwined with large thematic rooms where the installations are beautiful to behold. If you are going to see a single museum in New York, this should be the one.

One of the last audiobooks I listened to was Walter Isaacson’s Benjamin Franklin biography, where one of the passages goes through the story of how Duplessis’ portrait of Franklin came to be. This 1778 painting can be seen in the museum, and curiously enough, Franklin’s depiction on $100 bills from 1914 to 1990 had him wearing a fur coat, just like in this painting.

MET: Cloisters

With the same ticket, one can visit the Cloisters and the 5th Avenue museum on the same day. The Cloisters museum is America’s only museum dedicated exclusively to the art and architecture of the Middle Ages, and is smaller than the 5th Avenue one. Still, there is much to explore not only inside the museum, but also in its surroundings.

Surrounding the Cloisters is the beautiful Fort Tryon Park and its Heather Garden, sitting right next to the Hudson River. Bliss to behold.

Natural History Museum

- I lost count of the number of dinosaur skeletons I saw in the Natural History Museum. And nothing quite prepares you to see the gigantic skeleton of a Titanosaur. It’s so big that its head extends outside of its new home in the Museum’s fourth-floor gallery.

- While browsing the museum, I learned about mass dinosaur graves and their relationship to droughts, and went through thought-provoking sections, such as the presentation of a massive turtle specimen that, were it extinct, would probably be part of our collective imagination and even of movies.

Another thought that stuck with me is how deeply connected we are to other animals, Earth and outer space. Seeing life-sized representations of several of these makes them immediately more relatable.

Guggenheim

On Saturdays, from 5 to 8 pm, admission to the museum is “Pay What You Wish”, with a minimum of $1. I would recommend going then, since the museum can easily be visited in under an hour and, in my opinion, it’s more about the building than the pieces themselves.

Entertainment

Broadway

Broadway shows are a hallmark of NYC, although if you are from London or Europe, I would recommend not seeing shows that are already available in London. For example, I’ve heard that Hamilton is better seen in London, due to the larger contextual part that King George has in the UK.

The Chicago musical is a good bet, and is the one I attended. It is the second longest-running show ever to run on Broadway, behind only The Phantom of the Opera.

Comedy

Comedy Cellar is a comedy club in Manhattan where many top New York comedians perform, and where several comedians started, like Louis C.K. or Dave Chappelle. I found its comedy down to earth and edgy. You are asked to leave your phone in a pouch, which I believe makes the entire crowd more present, and also gives the comedians extra freedom, given how powerful cancel culture can be.

Points of Interest

Statue of Liberty

You can take the Staten Island Ferry from the Whitehall terminal, for free. On your way to the terminal, several people will try to cajole you into buying paid trips to the Statue of Liberty. The ferry is more than enough.

- The ferry runs every 30 minutes, 24 hours per day, on most days, and travel time is approximately 25 minutes.

- Once you get to Staten Island, you can either take some time to explore it, or just return immediately to Manhattan by exiting the ferry, entering St. George’s Ferry Terminal, and going through the boarding gates for the next ferry.

- When boarding at the Whitehall terminal, enter through the right side of the ferry in order to be on the same side on which the Statue of Liberty will appear. If boarding at St. George’s Ferry Terminal, enter through the left side of the ferry. Regardless, you can easily change sides once inside the ferry.

- Most people will likely go to the top floor with its larger windows, which will eventually get crowded. I recommend instead taking the bottom floor and finding a window that is already open. The view is much clearer (since you don’t have a worn-down piece of glass obscuring it), you can sit comfortably near the window, and you won’t need to fight a crowd (in principle).

Central Park

Central Park is an urban park between the Upper West Side and Upper East Side neighborhoods of Manhattan in New York City that was the first landscaped park in the United States. It is the sixth-largest park in the city, and it’s a great way to step out of the busyness of the city and discover the treasures that lie in the park.

High Line and Little Island

The High Line is a 1.45-mile-long elevated linear park, greenway and rail trail created on a former New York Central Railroad spur on the west side of Manhattan in New York City. If starting it from the north side, you can enter it near Hudson Yards, and walk your way south from there.

From the southern end of the High Line, you can see Little Island and quickly walk to it. Little Island is an artificial island park with some beautiful views and an interesting layout.

Financial District

9/11 Memorial

The memorial is located at the World Trade Center site, the former location of the Twin Towers that were destroyed during the September 11 attacks. The towers’ footprints are now home to two large, recessed pools. The sheer dimension of these is awe-inspiring. The entire site is filled with symbolism.

Wall Street

- On the street itself, we can find the New York Stock Exchange, the largest stock exchange in the world, which for decades filled the popular imagination with its hustle, bustle and hectic traders. Those same brokers and traders are now surrounded by computers that manage the majority of the buying and selling of stocks for their various accounts. Floor trading still exists, but it is responsible for a rapidly diminishing share of market activity.

- The Charging Bull of Wall Street is not really located on Wall Street itself, but it is quite near. In finance, the bull represents optimism and growth, and the statue stands for the same ideas.

- Federal Hall is a historic building whose original structure served as New York’s first City Hall and hosted the 1765 Stamp Act Congress before the American Revolution. With the establishment of the United States federal government in 1789, it was renamed Federal Hall, as it hosted the 1st Congress and was where George Washington was sworn in as the nation’s first president. The current structure was built as the U.S. Custom House for the Port of New York before serving as a Subtreasury building from 1862 to 1925, and the current national memorial commemorates the historic events that occurred at the previous structure.

Columbia University + Morningside Park

Walking west from Central Harlem, where you can find the Apollo Theater for example, you reach Morningside Park, from which you can climb up towards Columbia University, a private Ivy League research university in New York City.

Grant’s Tomb

- A mere 7-minute walk from Columbia University takes you to the General Grant National Memorial (Grant’s Tomb), a classical domed mausoleum and the resting place of Ulysses S. Grant, the American military officer and politician who served as the 18th president of the United States from 1869 to 1877. The underlying background music and the attention to detail are something to behold.

- Right beside it sits the soaring Riverside Church, all of this surrounded by the peaceful Riverside Park.

Citigroup Center

Due to material changes during construction, the building as initially completed was structurally unsound. To save money, Bethlehem Steel changed the plans in 1974 to use bolted joints, and wind loads were calculated from perpendicular winds, as required under the building code; in typical buildings, loads from quartering winds at the corners would be less.

In June 1978, after an inquiry from an engineering student, the structural engineer recalculated the wind loads on the building with quartering winds, and found these to significantly increase the load at the bolted joints, and that a wind capable of toppling Citicorp Center would occur every 55 years on average.

Starting in August 1978, construction crews covertly fixed the issue. Six weeks into the work, a major storm was off Cape Hatteras and heading for New York. The reinforcement was only half-finished, with New York City hours away from emergency evacuation. The storm eventually turned eastward and veered out to sea. The repairs were finished successfully, and no major issues have occurred since.

The Woolworth Building

Completed in 1912, the Woolworth Building was the tallest building in the world from 1913 to 1930, with a height of 241 meters. It blows my mind that such a tall building was built more than 100 years ago.

Billionaires’ Row

Home to some of the most expensive apartments in the world, Billionaires’ Row is a group of ultra-luxury residential skyscrapers. Several of them are so thin that it’s hard to believe they can stand upright without toppling over.

Lincoln Center

- Lincoln Center is a complex of buildings in the Lincoln Square neighborhood on the Upper West Side of Manhattan, where the beautiful Metropolitan Opera House can be found.

- It houses internationally renowned performing arts organizations including the New York Philharmonic, the Metropolitan Opera, the New York City Ballet, the Chamber Music Society of Lincoln Center, and the Juilliard School.

Grand Central Terminal

- Grand Central Terminal (aka Grand Central Station or Grand Central) is a commuter rail terminal located right in the center of the city. So even if you don’t stumble upon it during your commute, it is still easy to reach via public transportation.

- It has been the subject, inspiration, or setting for literature, television and radio episodes, and films, and every year the MTA hosts about 25 large-scale and hundreds of smaller or amateur film and television productions.

The Bronx

The Joker Stairs

The first picture in this note was taken at the “Joker Stairs”, which is the colloquial name for a step street connecting Shakespeare and Anderson avenues at West 167th Street in the Highbridge neighborhood. The stairs are quite different from when Joaquin Phoenix danced on them as the Joker. They are more colorful, and there is an ongoing construction site at the top.

The Birthplace of Hip Hop

DJ Kool Herc is credited with helping to start hip hop and rap music at a house concert at 1520 Sedgwick Avenue, which is currently covered by scaffolding, as seen here. I would recommend going during daylight hours and being attentive to your route, as it gets rougher on the way there.

Yankee Stadium

The stadium is the home field for the New York Yankees and New York City FC, and also has some small surrounding parks.

Brooklyn

Williamsburg (Bridge)

While the Brooklyn Bridge stands as the most famous bridge to cross the East River, you can easily dodge its crowds by crossing the Williamsburg Bridge, which leads you into Williamsburg, characterized by a contemporary art scene, hipster culture, and vibrant nightlife that has projected its image internationally as a “Little Berlin”.

You can find several interesting places in Williamsburg, such as Domino Park, Spoonbill & Sugartown Books and the City Reliquary Museum.

Brooklyn Bridge

The Brooklyn Bridge is an iconic landmark of NYC, which you can cross from Manhattan towards Brooklyn. Be advised that this crossing is often quite crowded, and there are several vendors on the bridge itself.

Dumbo & Brooklyn Heights

Once you cross the Brooklyn Bridge, you can head directly to Dumbo, where you have a beautiful view of the Manhattan Bridge.

You can also enjoy several scenic views of the Brooklyn Bridge and Manhattan, in both Dumbo and Brooklyn Heights.

Brooklyn Museum & Brooklyn Library

- The Brooklyn Museum is New York City’s second-largest museum, contains an art collection with around 500,000 objects, and is fairly easy to access from the subway. The breadth and depth of objects at the Brooklyn Museum is best compared to the Met, boasting strong departments of both Western and non-Western holdings. I couldn’t fit in enough time to visit the actual museum, but it is definitely on the to-do list in case I visit the city again.

- The Brooklyn Library’s four-story-high main entrance is a marvel to behold all by itself.

Prospect Park

Prospect Park is an expansive and peaceful urban park with beautiful landmarks and structures, such as the Boathouse on the Lullwater, several watercourses, bridges, monuments and statues.

5th Avenue

Rockefeller Center Christmas Tree

The Rockefeller Center Christmas Tree has been a yearly tradition ever since 1931. Nowadays it is a 20m+ Norway spruce, standing next to an equally historic skating rink, which was opened below the tree in the plaza in 1936. This year, you’ll be able to see the tree up until January 13th 2024.

Saks

- Directly facing the Rockefeller Center, you can find Saks Fifth Avenue, an American luxury department store founded in 1867.

- In partnership with Dior, as part of its annual holiday display, Saks is hosting a massive bronze circle broken up into eight sections, with a deep blue center decorated with all the zodiac signs and a spray of stars, and one big star in the middle. There is a periodic visual and sound show, which is truly impressive.

Trump Tower

Just a few blocks north of the above places lies Trump Tower, where Donald Trump descended on an escalator to announce his candidacy for president, the first step on a journey few believed would take him all the way to the White House.

Eat, Sleep, Move and Pay

Where to Eat

- Unless you are aiming for a specific high-demand restaurant at a specific time, there is limited need to pre-book, since you can just walk for a few minutes and find other quality restaurants.

- There are several food stalls around NYC, many of which you will be able to recognize by their LED lettering and smell of burnt meat. I would personally avoid these. There are food stalls which are incredible though. One of these is Shawarma Bay, just a block away from Radio City Music Hall. Incredible food, although it can gather quite a queue, which can take about 30 minutes, as was my case. Worth the wait though: cops were eating there.

- You can get incredibly good deli sandwiches in NYC, in places such as Sunny & Annie’s Deli. I personally tried one at Gold Deli, and it was seriously good.

- Are you in Times Square and want to grab a bite? Walk 2 blocks west and you will find yourself in Hell’s Kitchen, packed with good restaurants, with much less hassle.

- Wan Wan Thai restaurant was the best dining experience I had in NYC, for an acceptable price.

- Joe’s Pizza offers traditional New York slice-style pizza at a fair price, and has made a dent in popular culture. Definitely worth it.

- Want to grab a quick, healthy, tasty bite? Dig Inn has you covered.

- Williamsburg’s L’Industrie Pizzeria apparently has some of the best pizzas in NYC, but I can’t attest to that, given there was a massive queue when I arrived there. So I moved on to Rosa’s Pizza, where I had a delicious slice.

- In markets such as the Chelsea Market you can find several good food places.

- Fraunces Tavern is a museum and restaurant that played a prominent role in history before, during, and after the American Revolution. At various points in its history, Fraunces Tavern served as a headquarters for George Washington, a venue for peace negotiations with the British, and housing for federal offices in the Early Republic.

Where to Stay

- This all depends on your budget, the activities you would like to do, who is going with you, etc. But in general, I would advise staying in Manhattan, or at least near a transport connection that can get you quickly to the city center, since it is where most of the points of interest are located, and transport links are quite good from there. Most likely you will only be in your lodging to sleep, and not much else.

- If looking for a hostel, HI New York City Hostel is a good one. It even sports free weekly comedy sessions every Sunday night. It’s located near Central Park, and you can’t go wrong with this one. In general, you can search through https://www.hostelworld.com/ and book through there; it was quite reliable for the two bookings I made.

- If looking for something more comfortable, citizenM Hotels offer incredibly good value and location. Apart from the comfortable rooms, which already have a nice view, the one on Bowery even has a rooftop terrace with an amazing view of the city.

Money / How to Pay

- Stores, food establishments (even chains), bodegas / delis, 7-11s, etc. present their prices as pre-tax. New York City sales tax ranges from 4% to 8.875%, and some products are exempt from it.

- Be prepared to tip everywhere. In restaurants it is customary to tip 20%. There is some debate about whether you should calculate the tip on the amount before or after tax.

- You don’t really need cash. You do need it if you want to buy from street vendors, or if you want to dodge the additional cost that certain sellers impose on card transactions (like the shawarma above).

- In restaurants and entertainment venues, it is common practice for the attendant to leave with the credit card, and later return with a receipt that is meant to be signed and filled in with the tip amount.

- It also happened to me that, when trying to pay a market vendor by contactless card, the card kept being rejected. Since the card insertion feature was not available, the vendor typed the card number and details into the system to proceed with the payment. That failed as well because, as I later discovered, my bank had blocked the card due to a false-positive fraud suspicion. Eventually I just paid with cash to resolve the issue.

- As a European, the practices of being physically separated from the card, verification via signature, and freely writing down credit card numbers strike me as quite insecure.
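
Since the pre-tax prices and tipping kept my inner engineer busy, here is a rough sketch of the arithmetic in Python. It is only an illustration, not an official formula: the function name `estimate_total` is made up, the defaults assume the top 8.875% sales tax rate and the customary 20% tip mentioned above, and the `tip_on_pre_tax` flag covers both sides of the before-or-after-tax debate.

```python
def estimate_total(pre_tax_price, tax_rate=0.08875, tip_rate=0.20, tip_on_pre_tax=True):
    """Estimate what a restaurant bill really costs (hypothetical helper).

    tax_rate and tip_rate are assumptions taken from the figures above;
    the actual tax depends on what you buy, and the tip is up to you.
    """
    tax = pre_tax_price * tax_rate
    # The tip is computed either on the menu price or on the price including tax
    tip_base = pre_tax_price if tip_on_pre_tax else pre_tax_price + tax
    tip = tip_base * tip_rate
    return round(pre_tax_price + tax + tip, 2)


print(estimate_total(40.00))                        # 51.55
print(estimate_total(40.00, tip_on_pre_tax=False))  # 52.26
```

In other words, a $40 menu price ends up closer to $52 either way, so the before-or-after-tax question changes the total by well under a dollar here.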

Getting Around / Transportation

- Walking is one of the best ways to discover the city. Sidewalks are broad, the city is grid shaped (so expect to be given coordinates in the form of street / avenue to pinpoint a place), and pedestrian lights and timings were consistent everywhere I visited. The large majority of my city exploration was done on foot, covering 180km over 4 days. Extra tip: if you are used to barefoot shoes, wearing firm ground outsole Vivos to do so is amazing. My feet were sore, but happy.

- Walking through the perpendicular numbered streets and avenues is absolute bliss, and makes it quite easy to navigate. Several times I just looked at the map and quickly memorized something like “3 right, 2 left”, which translated to “cross 3 roads and turn right, and then cross 2 roads and turn left”. This would keep me going for a long time and give me time to appreciate the surroundings, without the need to constantly check the map.

- Ferry boats are a great way to see the city for a low price ($4 for a one-way ticket), and you can buy these tickets through the NYC Ferry app or a ticket vending machine.

- The subway and buses now have OMNY, which just means that you can tap in with your credit / debit card. Each ride costs $2.90, and there are no different prices for different zones. This has a [rolling 7 day price cap](https://omny.info/fares), so you never pay more than $34 over that period.

- MetroCard is the pre-paid version of this. If you need one, buy it directly from the machines to avoid being scammed. For example, a 7-Day Unlimited MetroCard costs $34 upfront, and can be used any number of times during those 7 days.

- Be aware that if you swipe in, leave the station, and then attempt to swipe in again shortly afterwards, your swipe will be rejected for being too recent. If that happens, ask an MTA attendant to let you in (if available), or do like many others and wait for someone to crack open the emergency exit from the inside. If you hear an alarm going off in the station, most likely it is because someone opened the emergency exit to let others in.

- Just as routine events such as figuring out post-tax prices or how much and when to tip require some mental effort, figuring out the different edge cases of the subway system will keep your mind busy. It is as if a perpetual rat race were being played in the city.

- Sometimes trains stop at all stations, sometimes they don’t, sometimes trains have indicators of which station you are in, sometimes they don’t, sometimes the station has (non-standard) indications of how much time it takes for the next train to arrive, sometimes they don’t. Sometimes it rains heavily inside the stations, sometimes it doesn’t.
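
For those wondering when the 7-day cap actually matters, here is a small Python sketch using only the numbers above ($2.90 per ride, $34 cap). The function name `weekly_subway_cost` is made up for illustration, and it simply assumes you never pay more than the cap within one 7-day window; the real OMNY rules may have details this ignores.

```python
def weekly_subway_cost(rides, fare=2.90, weekly_cap=34.00):
    """Cost of `rides` tap-ins within one 7-day window (hypothetical helper).

    Assumes a flat fare per ride and a hard cap on the weekly total,
    as described above; transfers and other fare rules are ignored.
    """
    return round(min(rides * fare, weekly_cap), 2)


for rides in (5, 11, 12, 20):
    print(rides, weekly_subway_cost(rides))
# 5 14.5
# 11 31.9
# 12 34.0
# 20 34.0
```

With these numbers the cap only kicks in from the 12th ride, so over a short trip it pays off only if you ride the subway or bus a lot.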

Other Notes

- Something unexpected to me, which cannot be transmitted through visual media, is the collection of smells around New York, in almost all parts of the city except a set of parks and neighborhoods mostly outside Manhattan: the smell of garbage (since it commonly accumulates in heaps of bags on city sidewalks); burnt street meat; horse manure in some parts of Central Park; a smell I can only describe as a pervasive detergent / laundry smell, which could even be detected inside several buildings like the MET and delis; the strong smell of road paint (since it appears that several of the streets were recently repainted); and signature smells from the subway.

- Several subway lines are relatively shallow, so they are easily heard at street level. They also generally run below the roads themselves, which I assume makes it easier to build incredibly high skyscrapers whose foundations run quite deep.

- Be attentive to your surroundings and where you walk, for example when dodging garbage debris and sidewalk cellar doors, be they open or closed.

- Be prepared to encounter people who are not as well off, and might be living on the streets. Use your judgment, stay out of trouble, be respectful and don’t engage with people who have serious mental issues. If you are in a rougher area, memorize your path when possible, and stop at a local shop if you need to get your bearings. Use your common sense.

- You might have noticed that I didn’t mention the common Edge / Top of the Rock / Summit One / Empire State Building / etc. observation decks. It’s not that I don’t enjoy incredible views, but they didn’t fall high enough on the list of priorities, especially because I had the opportunity to see skyline views from other buildings I passed by, such as the hotel.

- I tend to like long transatlantic flights, since they provide a moment of low stimuli, where there is less incentive to use the internet. My personal piece of advice would be to avoid the usual in-flight entertainment. I love to use these flights to resume a book or to write down my reflections from a trip, for example (several lines of this article were written during the return flight).

- This only shows my ignorance, but I had no idea that so many well-known artists were originally from New York, like Jay-Z (Brooklyn), Cardi B (South Bronx), Spike Lee (Brooklyn), and of course, Jenny from the block. That explains why I repeatedly heard “Empire State of Mind”, from Jay-Z and Alicia Keys (Hell’s Kitchen), and “All I Want for Christmas Is You”, from Mariah Carey (raised in Huntington).

Closing Thoughts

Ever since I was a kid I have had a considerable fascination with the USA. The culture that arrived through the small and big screens, the big companies and entrepreneurs that I admired, the language, the people I’ve met along the way, the stories. Having had the opportunity to visit and experience it first hand was a true privilege. I lived it like it was my last time, and it imprinted in me several thoughts and perspectives that I’m sure will last a lifetime.

New York ain’t no Disneyland, and Elmo’s pictures in Times Square don’t come for free either, so set expectations accordingly. It can be one of the most fulfilling experiences you’ll ever have. I advise you to come prepared and plan accordingly.

Frank Sinatra sang that he wants “to wake up in the city that doesn’t sleep”. That phrase has a deep meaning that only struck me during my trip. The city indeed does not sleep. The Times Square lights, the 24-hour subway, the constant movement. Living in NYC wakes you up, not only literally, but also figuratively. It wakes you up to a different reality that keeps you on your toes in several ways. In a way, it’s a celebration of life.
